
Healthcare teams today deal with growing patient data, complex workflows, and constant pressure to improve outcomes. AI EHR MVP development offers a practical way to turn an idea into a working product without building a full EHR system upfront. Instead, the focus is on solving one clear problem and building only what is needed to test it in real conditions.

This approach involves developing a minimum viable product (MVP) with essential EHR capabilities and selected AI features that support a specific workflow, such as patient data management or clinical support. AI is built into how the system works, not added later, which makes early design decisions more important.

For teams that do not have in-house expertise, working with a custom software development company helps translate healthcare requirements into a structured product without overbuilding or missing critical constraints.

The challenge is deciding what to include and what to leave out. Adding too many features can slow development, while the wrong AI use case can add complexity without real value. This is where teams often evaluate options, including top AI development companies in Florida, to understand how similar products are being approached and built.

In this blog, we break down how to approach AI-powered EHR MVP development, including how to define scope, structure the system, and move from idea to a deployable product.

Most healthcare teams don’t need a full EHR system to get started. What they need is something smaller that actually works in a real setting. That’s where AI EHR MVP development comes in. Instead of building everything at once, the idea is to start with a focused product that solves one clear problem and can be tested with real users.

This approach helps teams avoid spending months on features that may not be needed. In AI EHR MVP platform development, early decisions around data and AI matter a lot, so keeping the scope tight makes it easier to build, test, and adjust without major rework later.

An AI EHR MVP is a working product built around one specific use case.

For example, it might help with documenting patient notes, organizing records, or supporting a simple clinical decision. What matters is that it does one thing well and can be used in a real workflow.

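At a basic level, it should have the essential EHR capabilities that the chosen workflow depends on, plus only the AI features that directly support it.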

The goal is not to cover everything. It is to build something useful enough to learn from. This is where teams often bring in AI consulting services to make sure they are not overcomplicating things too early.

AI changes how the system handles data in many ways. In a regular EHR, data is entered, stored, and then viewed when needed. But when building an EHR MVP integrating AI, the system starts doing more with that data.

Instead of just storing information, it can process it to generate summaries, flag issues, or support decisions. This means the system needs to handle data a bit differently.

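A few things change: data has to be cleaned and organized before the AI processes it, and outputs such as summaries or alerts have to show up inside the workflow, where people can act on them.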

So, the product is no longer just a place to keep records. It becomes something that actively supports how work gets done.

Understanding this shift is important when building an EHR MVP integrating AI, because how you design the system early on will directly affect how useful and scalable the product becomes later.

A common mistake in AI EHR MVP development is starting with a list of features instead of a clear problem. That usually leads to products that look complete but are hard to use or don’t solve anything meaningful.

Pick one problem that shows up in day-to-day work and focus on that.

When teams are building an AI-Powered EHR MVP, this kind of focus helps keep the product grounded. It also makes it easier to test whether the idea actually works before expanding further.

Most healthcare tools fail not because they lack features, but because they don’t fit into how work actually happens.

Instead of asking “what should we build?”, it helps to ask where the current workflow slows down, repeats itself, or goes wrong.

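When you map a workflow step by step, several patterns start to show: the same patient information gets entered more than once, work waits on a review, or someone has to piece records together by hand.

These are workflow gaps. Building around them keeps the product tied to how work actually happens, which makes it easier to adopt and easier to test.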

This is also where teams often rely on AI integration services, not just to add AI, but to fit it into existing processes without disrupting how people work.


Not every problem is worth solving in an MVP. The goal is to find problems that are frequent, visible, and costly in terms of time or effort.

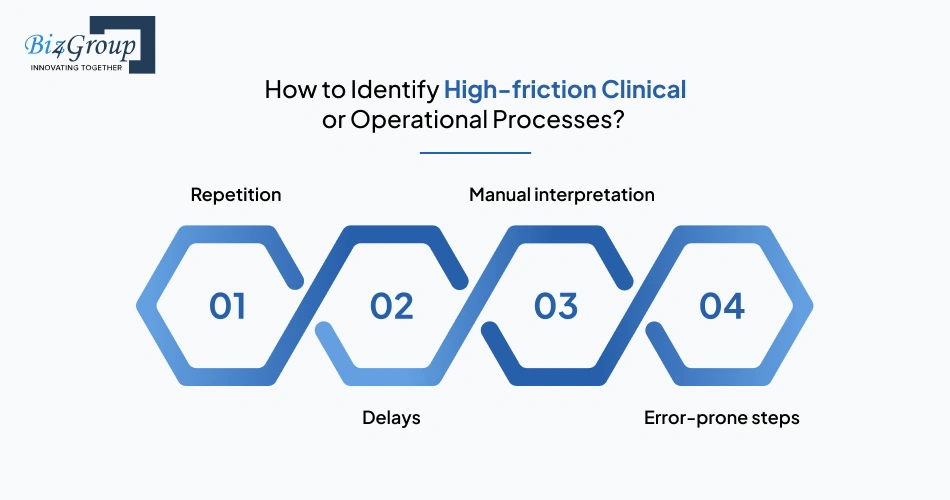

High-friction processes usually have a few clear signs:

- Tasks that are done over and over again in the same way. Example: entering similar patient information across multiple forms.
- Steps where work pauses because someone needs to review or verify data. Example: waiting for documentation review before moving forward.
- Situations where people need to read, interpret, and summarize information. Example: going through patient history to make a decision.
- Places where mistakes happen due to manual entry or oversight. Example: missing or inconsistent patient data.

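To narrow it down, check each candidate against these signs and favor the ones that happen most often and cost the most time or effort.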

These are the kinds of problems worth focusing on before you launch your AI EHR MVP, because they are easier to measure and validate.
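One rough way to compare candidate problems is to estimate how much staff time each one consumes per week. The sketch below is a minimal illustration under assumed inputs: the `CandidateProblem` fields, the example tasks, and every number are hypothetical placeholders to replace with what you observe in your own workflow.

```python
from dataclasses import dataclass


@dataclass
class CandidateProblem:
    name: str
    occurrences_per_week: int      # how often the task happens
    minutes_per_occurrence: float  # staff time each occurrence takes
    error_prone: bool              # prone to manual-entry mistakes?


def weekly_minutes(problem: CandidateProblem) -> float:
    """Estimated staff minutes spent on this problem per week."""
    return problem.occurrences_per_week * problem.minutes_per_occurrence


def rank_problems(problems: list) -> list:
    """Highest weekly time cost first; error-prone tasks win ties."""
    return sorted(
        problems,
        key=lambda p: (weekly_minutes(p), p.error_prone),
        reverse=True,
    )


# Hypothetical numbers for illustration only.
candidates = [
    CandidateProblem("Re-entering patient info across forms", 120, 4, True),
    CandidateProblem("Waiting for documentation review", 40, 10, False),
    CandidateProblem("Summarizing patient history before visits", 60, 8, False),
]

for p in rank_problems(candidates):
    print(f"{p.name}: {weekly_minutes(p):.0f} min/week")
```

Ranking by weekly minutes is deliberately crude. It favors problems that are frequent and costly, which also tend to be the easiest ones to measure during an MVP.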

Once the problem is clear, the next step is deciding what success looks like. Without this, it is easy to build something that works technically but does not deliver real value.

Good success criteria are simple, specific, and tied to the workflow you are improving.

Instead of vague goals like “improve efficiency,” define specific, measurable outcomes such as faster documentation, fewer manual steps, or more consistent records.

It also helps to measure these outcomes over short timeframes, so results show up during the MVP stage rather than long after launch.

This clarity makes decision-making easier during development and after launch. It also helps avoid adding features that do not contribute to the core goal.
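To make a criterion like “faster documentation” concrete, you can compare a baseline measurement with the same measurement during the pilot. This is a hedged sketch: the minutes-per-note samples and the 20% target are invented for illustration, and the median is just one reasonable choice of summary statistic.

```python
from statistics import median


def percent_reduction(baseline: list, pilot: list) -> float:
    """Percentage drop in the median from baseline to pilot."""
    before, after = median(baseline), median(pilot)
    return (before - after) / before * 100


# Hypothetical minutes spent per patient note, before and during the pilot.
baseline_minutes = [12, 15, 11, 14, 13]
pilot_minutes = [9, 8, 10, 9, 11]

TARGET_REDUCTION = 20.0  # hypothetical success criterion: 20% faster

reduction = percent_reduction(baseline_minutes, pilot_minutes)
print(f"Median documentation time down {reduction:.1f}%")  # 30.8%
print("Target met" if reduction >= TARGET_REDUCTION else "Target not met")
```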

When done right, this step creates a strong foundation for building an AI-Powered EHR MVP, where every feature and AI component is tied back to a measurable outcome.

Focusing on a real problem, grounding it in actual workflows, and defining clear success criteria gives the MVP direction. It keeps the product practical, testable, and easier to improve as you move forward.

One of the most important decisions in AI EHR MVP development is choosing what to build first. Adding too many features can slow development and make the product harder to test, while too few can limit usefulness. The goal is to include only what is needed to support a real workflow and generate meaningful feedback.

A functional MVP does not need to cover every aspect of an EHR system. It only needs to support a specific workflow with enough structure to make it usable in practice.

Below is a simple breakdown of core features and why they matter:

| Feature Area | What It Includes | Why It Matters in MVP |
|---|---|---|
| Patient Data Management | Create, store, and update patient records | Forms the foundation of any EHR system |
| Data Retrieval | Search and access patient information quickly | Enables real-time usage during workflows |
| Basic Workflow Support | Task-specific flows like documentation or review | Keeps the MVP focused on a clear use case |
| User Access Control | Role-based access for users | Ensures basic data security and usability |
| Audit Logs | Track changes and user actions | Important for traceability and compliance readiness |
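The five feature areas in the table can start out very small. Below is a minimal in-memory sketch, assuming Python and a made-up `ROLE_PERMISSIONS` mapping, that combines record create/update, retrieval by ID, role-based access checks, and an audit entry for every access attempt. `PatientStore` and its methods are hypothetical names; a real MVP would back this with a database and real authentication.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical roles and permissions; real ones come from your workflow.
ROLE_PERMISSIONS = {
    "clinician": {"read", "write"},
    "front_desk": {"read"},
}


@dataclass
class AuditEntry:
    timestamp: datetime
    user: str
    action: str
    patient_id: str


@dataclass
class PatientStore:
    records: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def _log(self, user: str, action: str, patient_id: str) -> None:
        self.audit_log.append(
            AuditEntry(datetime.now(timezone.utc), user, action, patient_id)
        )

    def _authorize(self, user: str, role: str, action: str, patient_id: str) -> None:
        """Role-based access check; every attempt is logged, allowed or not."""
        if action not in ROLE_PERMISSIONS.get(role, set()):
            self._log(user, f"denied:{action}", patient_id)
            raise PermissionError(f"role '{role}' may not {action}")
        self._log(user, action, patient_id)

    def upsert(self, user: str, role: str, patient_id: str, data: dict) -> None:
        """Create or update a patient record."""
        self._authorize(user, role, "write", patient_id)
        self.records.setdefault(patient_id, {}).update(data)

    def get(self, user: str, role: str, patient_id: str) -> Optional[dict]:
        """Look up a record by ID; returns a shallow copy of the stored dict."""
        self._authorize(user, role, "read", patient_id)
        record = self.records.get(patient_id)
        return dict(record) if record is not None else None


store = PatientStore()
store.upsert("dr_lee", "clinician", "p-001", {"name": "Jane Doe", "allergies": ["penicillin"]})
print(store.get("desk_1", "front_desk", "p-001"))
try:
    store.upsert("desk_1", "front_desk", "p-001", {"allergies": []})
except PermissionError as err:
    print("Blocked:", err)
print([(e.user, e.action) for e in store.audit_log])
```

Logging denied attempts as well as successful ones is a small design choice that makes the audit trail more useful for traceability later.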

These features are not about completeness. They are about making sure the product works in a real setting without unnecessary complexity.

When teams approach AI electronic health record MVP development, keeping this foundation minimal helps avoid rework and keeps the system easier to extend later.

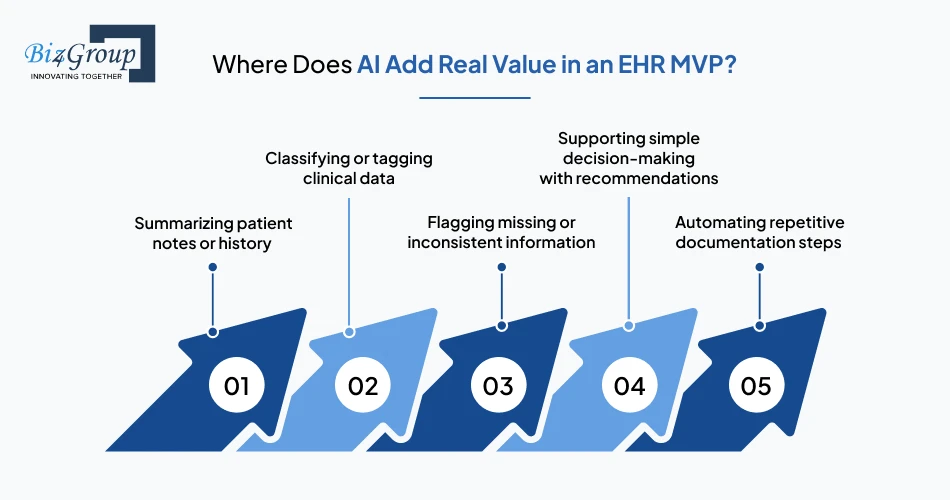

AI should not be added just to make the product seem advanced. It should support a specific task where manual effort, time, or inconsistency is already a problem.

AI tends to add value early in a few common areas, such as generating summaries, classifying records, and flagging issues that need attention.

What matters is not how many AI features are included, but whether they reduce effort or improve accuracy in a visible way. At this stage, teams often explore AI model development to define how inputs and outputs will work within a limited scope, rather than building complex systems too early.
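One way to keep AI scope limited is to pin down the input and output of a single capability before choosing a model. The sketch assumes a note-summarization use case; `SummaryRequest`, `SummaryResult`, and `summarize` are hypothetical, and the “summarizer” simply takes the first sentence of each note as a stand-in for a real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SummaryRequest:
    patient_id: str
    notes: tuple             # free-text notes for one encounter
    max_sentences: int = 2   # keep output short enough to review quickly


@dataclass(frozen=True)
class SummaryResult:
    patient_id: str
    summary: str
    source_note_count: int   # shows the reviewer what the summary drew on


def summarize(request: SummaryRequest) -> SummaryResult:
    """Placeholder: first sentence of each note, stand-in for a real model."""
    sentences = []
    for note in request.notes:
        first = note.split(". ")[0].strip().rstrip(".")
        if first:
            sentences.append(first + ".")
    return SummaryResult(
        patient_id=request.patient_id,
        summary=" ".join(sentences[: request.max_sentences]),
        source_note_count=len(request.notes),
    )


request = SummaryRequest(
    "p-001",
    (
        "Patient reports mild headache. No fever.",
        "BP stable at follow-up. Continue current plan.",
        "Labs pending.",
    ),
)
print(summarize(request).summary)
# Patient reports mild headache. BP stable at follow-up.
```

Because the rest of the workflow only sees the request and result types, the placeholder could later be swapped for a real model without changing the code around it.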

In most cases, a single well-placed AI capability is more useful than multiple loosely connected ones.

Once features and AI use cases are identified, the next step is deciding what makes it into the MVP. A simple way to approach this is to evaluate each feature based on three questions:

Question 1: Does it directly support the core workflow?

Question 2: Can it be tested with real users in the MVP stage?

Question 3: Does it add measurable value within a short timeframe?

Based on the answers to these questions, features can be grouped into:

It is also helpful to consider constraints like data availability, integration effort, and compliance requirements.
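The three questions translate naturally into a small triage function. In this sketch the bucket names (“build now”, “next iteration”, “defer”), the `blocked_by_constraint` flag standing in for data, integration, or compliance constraints, and the example features are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    supports_core_workflow: bool         # Question 1
    testable_with_users: bool            # Question 2
    short_term_measurable_value: bool    # Question 3
    blocked_by_constraint: bool = False  # data, integration, or compliance hurdle


def triage(feature: Feature) -> str:
    """Assign a feature to a hypothetical MVP bucket."""
    answers = (
        feature.supports_core_workflow,
        feature.testable_with_users,
        feature.short_term_measurable_value,
    )
    if all(answers) and not feature.blocked_by_constraint:
        return "build now"
    if feature.supports_core_workflow:
        return "next iteration"  # relevant, but not ready for the MVP
    return "defer"


features = [
    Feature("Create and update patient records", True, True, True),
    Feature("AI note summarization", True, True, True),
    Feature("Lab system integration", True, False, True, blocked_by_constraint=True),
    Feature("Analytics dashboard", False, True, False),
]
for f in features:
    print(f"{f.name}: {triage(f)}")
```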

Teams that follow this approach tend to move faster and avoid unnecessary iterations during MVP development for AI EHR.

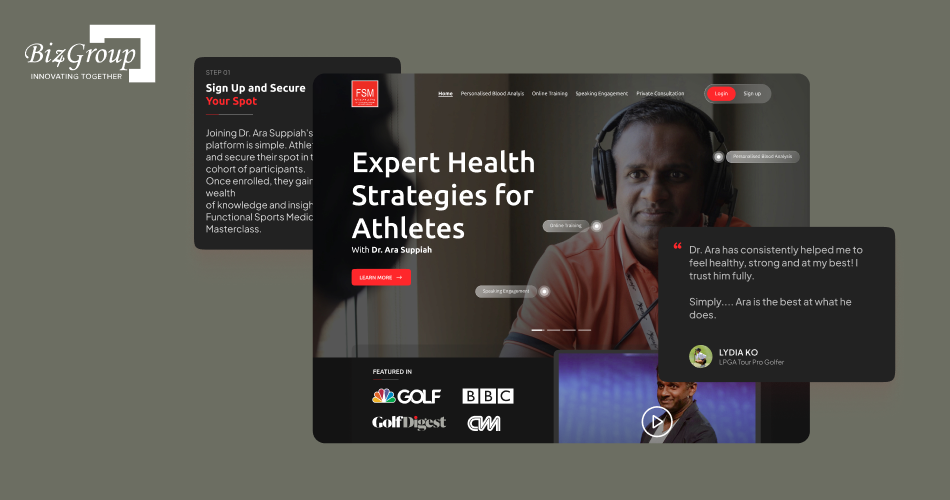

Portfolio Spotlight

Dr Ara is an AI-powered health platform that analyzes blood reports and translates them into personalized health insights, covering diet, sleep, and performance. It shows how structured patient data and AI can work together in a focused workflow, much like an MVP that validates real-world usability before expanding into a broader healthcare system.

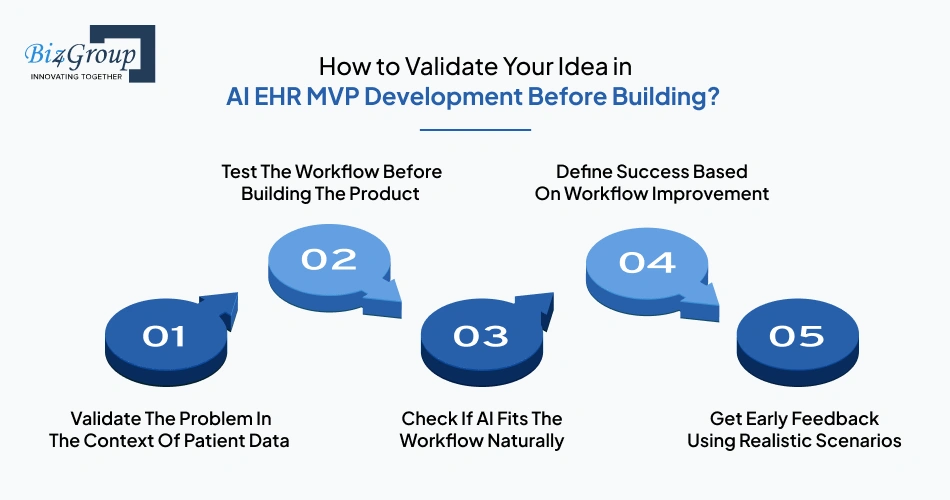

Before you start building, it helps to ask a simple but important question: will this idea actually work in a real clinical environment?

In AI EHR MVP development, validation is about how well your idea fits into workflows that depend on patient data, documentation, and decision-making. Getting this right early saves time and avoids building something that looks useful but is hard to use in practice.

It is not enough to know that a problem exists. You need to see how it shows up when patient data is being created, reviewed, or updated. For example, delays during documentation or inconsistencies in records often point to deeper workflow issues. This is where MVP development for EHR with AI should begin, grounded in how data is actually handled.

In healthcare, workflows are tightly connected to real situations, not ideal scenarios. Walk through how your solution would work step by step, using realistic patient cases. This helps you see whether the process feels natural or creates extra steps, especially when multiple roles interact with the same data.

AI should make working with patient data easier, not more complicated. Ask whether it reduces effort in documentation, improves how data is interpreted, or supports faster decisions. Some teams explore AI automation services at this stage to understand where AI adds value without forcing it into places where it is not needed.

Success should be tied to what changes in the workflow, not just what gets built. For example, faster documentation, fewer manual steps, or more consistent records are clear indicators. These outcomes help you understand if the MVP is actually solving the problem.

Even without a full product, you can show early versions or walk through scenarios with real users. What matters is seeing how they react when handling patient data or making decisions. Their feedback will quickly highlight gaps that are not obvious during planning.

Validation is what turns an idea into something worth building. When your problem, workflow, and expected outcomes are clear, you can move forward with more confidence as you build your AI-driven EHR MVP.


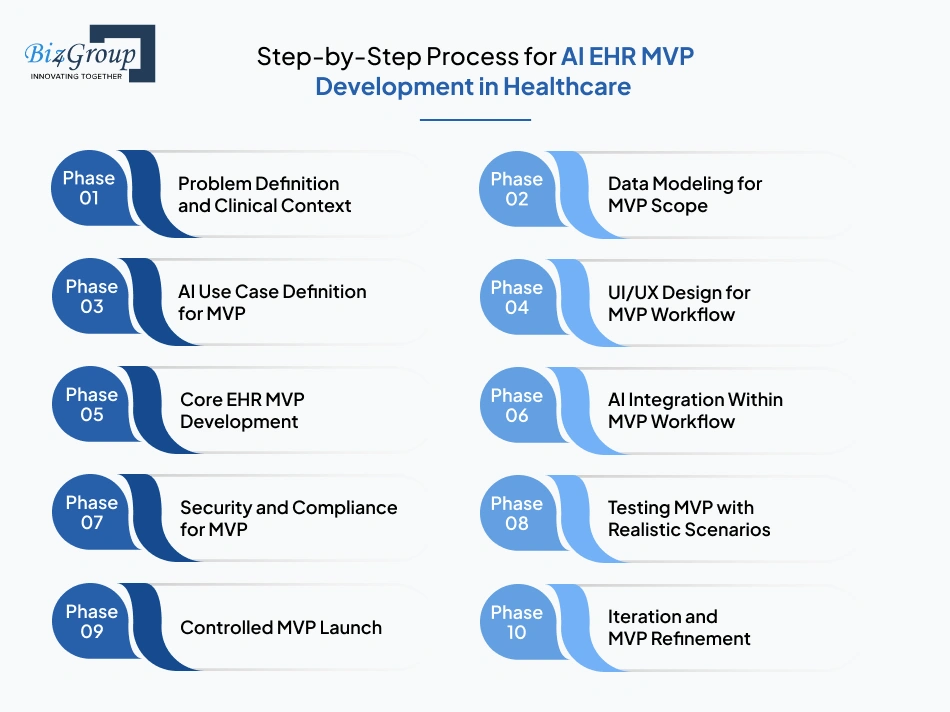

A typical AI EHR MVP development cycle in healthcare takes around 8 to 12 weeks, depending on scope, data complexity, and integrations. The goal is not to build a full system, but to move step by step toward an MVP that fits real clinical workflows and can be tested safely with actual usage scenarios.

Timeline: 1 week

This phase sets the direction for MVP development services by identifying a single workflow and understanding how patient data moves through it. The focus is on solving one clear problem instead of defining a broad system.

Timeline: 1–2 weeks

This phase focuses on structuring only the data needed for the MVP. It ensures the system can handle real scenarios while staying simple enough to build and test quickly.

This is typically where teams start thinking about how to create AI EHR MVP for patient data management without overcomplicating the data layer early on.

Timeline: 1 week

This phase identifies where AI fits into the workflow and what it is expected to do. The goal is to define a clear, limited role for AI within the MVP.

At this stage, teams often evaluate available AI solutions to build healthcare EHR MVP platforms to avoid building everything from scratch.

Timeline: 1–2 weeks

This phase focuses on designing how users will interact with the system during the MVP stage. The goal is to create simple, workflow-aligned UI/UX designs that make patient data easy to access, update, and act on without adding unnecessary complexity.

At this stage, teams often focus on how to make an AI EHR system MVP for healthcare businesses usable in real scenarios, ensuring that the interface supports speed and clarity rather than feature depth.


Timeline: 3–4 weeks

This phase focuses on building the base system required for the MVP. It ensures that patient data and workflows function reliably before adding AI components.

This is the phase where you effectively build AI electronic health record MVP for startups, even if AI itself is not fully integrated yet.

Timeline: 1–2 weeks

This phase integrates AI into specific steps within the workflow. The focus is on making AI outputs useful and usable in real scenarios.

This is also where many teams start thinking practically about who can build an AI EHR MVP platform with the right balance of healthcare and AI expertise.


Timeline: 1 week

This phase prepares the MVP for real-world use by implementing basic security and compliance measures without slowing development.

Timeline: 1 week

This phase tests the MVP in conditions that reflect real usage. It focuses on how the system behaves when exposed to real workflows and imperfect data.

Timeline: 0.5–1 week

This phase introduces the MVP to a limited group of users. The goal is to observe real usage without exposing the system to large-scale risk.

Timeline: Ongoing

This phase improves the MVP based on real usage data. It focuses on refining workflows and AI outputs before expanding the product.

Following this phased approach is often the best way to develop AI EHR MVP for healthcare startups, especially when the focus stays on real workflows instead of feature expansion. It keeps the product grounded, testable, and easier to evolve.
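A quick sanity check on the phase timelines: if every phase ran strictly one after another, the ranges would add up to more than the 8 to 12 weeks quoted for the full cycle, which suggests that estimate assumes some phases overlap. The phase labels in this sketch are shorthand added here for the descriptions above, and the ongoing improvement phase is left out of the total.

```python
# Phase durations in weeks as (min, max). Labels are shorthand added here;
# the ongoing improvement phase is excluded because it has no fixed end.
PHASES = {
    "Problem and workflow definition": (1, 1),
    "Data modeling": (1, 2),
    "AI use-case definition": (1, 1),
    "UI/UX design": (1, 2),
    "Core system build": (3, 4),
    "AI integration": (1, 2),
    "Security and compliance": (1, 1),
    "Testing": (1, 1),
    "Limited rollout": (0.5, 1),
}

sequential_min = sum(low for low, _ in PHASES.values())
sequential_max = sum(high for _, high in PHASES.values())
print(f"Back-to-back total: {sequential_min} to {sequential_max} weeks")
# Back-to-back total: 10.5 to 15 weeks
```

Run back to back, the phases take 10.5 to 15 weeks, so reaching 8 to 12 weeks presumably depends on overlapping work, such as designing screens while the data model is still being finalized.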

System design is where many ideas either become practical or fall apart. In AI EHR MVP development, the system is not just storing patient data; it is also processing it to support workflows and generate outputs. This means the design needs to handle both traditional EHR functions and AI-driven behavior without becoming overly complex.

| Layer | What It Does | What to Consider in MVP |
|---|---|---|
| Data Ingestion Layer | Captures patient data from inputs such as forms, devices, or integrations | Keep inputs simple and structured enough to support the chosen workflow |
| Data Storage Layer | Stores patient records and related information | Use a flexible structure that can support both structured and unstructured data |
| Processing Layer | Handles data transformation, validation, and preparation | Ensure data is cleaned and organized before any AI processing |
| AI Layer | Generates outputs such as summaries, classifications, or alerts | Limit to one clear use case to avoid unnecessary complexity |
| Application Layer | Interface where users interact with the system | Design around real workflows, not generic dashboards |
| Integration Layer | Connects with external systems if required | Keep integrations minimal at MVP stage to reduce dependency risks |

Each of these layers works together, but they do not need to be fully developed at the MVP stage. The focus should be on how data moves across the system and where decisions or actions are triggered. Teams often integrate AI into an app at specific points in the workflow rather than across the entire system to keep things manageable. This is especially relevant when teams aim to make an AI EHR system MVP for healthcare businesses without overcomplicating early architecture.
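To make the idea of data moving through the layers concrete, here is a deliberately tiny sketch with one function per layer. Everything in it is assumed: `REQUIRED_FIELDS`, the dict standing in for storage, and the keyword check standing in for the AI layer, which is a placeholder rather than a real model. The integration layer is left out entirely, in line with keeping integrations minimal at this stage.

```python
from typing import Optional

# Hypothetical minimum fields the chosen workflow needs.
REQUIRED_FIELDS = ("patient_id", "note")
STORE: dict = {}  # stand-in for the storage layer


def ingest(raw: dict) -> dict:
    """Ingestion layer: accept a form submission, trimming stray whitespace."""
    return {k: v.strip() if isinstance(v, str) else v for k, v in raw.items()}


def process(record: dict) -> Optional[dict]:
    """Processing layer: hold back incomplete data before it reaches the AI."""
    if any(not record.get(name) for name in REQUIRED_FIELDS):
        return None
    return record


def ai_layer(record: dict) -> dict:
    """AI layer placeholder: a keyword check standing in for one real model."""
    needs_follow_up = "follow-up" in record["note"].lower()
    return {**record, "flag": "schedule follow-up" if needs_follow_up else None}


def handle_submission(raw: dict) -> Optional[dict]:
    """Application entry point: one pass through the layers."""
    record = process(ingest(raw))
    if record is None:
        return None  # the application layer would ask the user to complete the form
    enriched = ai_layer(record)
    STORE[enriched["patient_id"]] = enriched
    return enriched


print(handle_submission({"patient_id": "p-001", "note": " Needs follow-up in 2 weeks. "}))
print(handle_submission({"patient_id": "p-002", "note": "   "}))  # None
```

Validating in the processing layer before the AI runs mirrors the table's advice to clean and organize data first, so incomplete records never produce unreliable outputs.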

A simple, layered design like this is often the best way to create AI EHR MVP for hospitals and clinics, because it keeps the system flexible, easier to test, and ready to scale without major redesign.

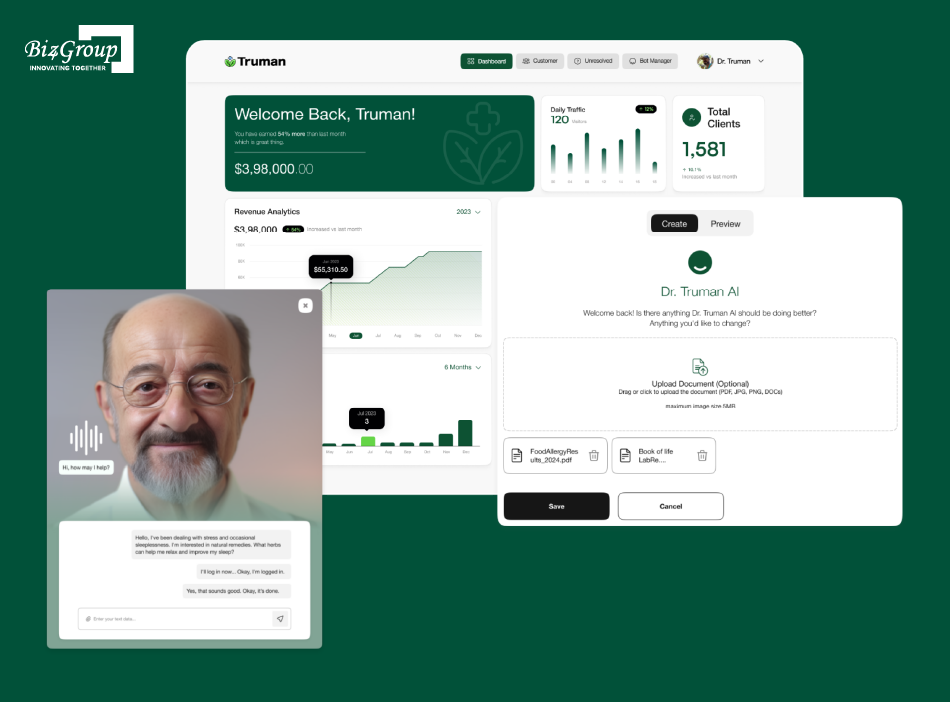

Portfolio Spotlight

Truman is an AI-driven wellness platform featuring a conversational AI avatar that provides personalized health guidance and recommendations based on user profiles. It highlights how AI can be embedded into user interactions, showing how MVPs can move beyond data storage into active engagement and decision support within healthcare experiences.

Compliance can feel like a heavy topic, especially at the MVP stage. But in AI EHR MVP development, you don’t need to solve everything at once. What matters is getting the basics right so patient data is handled safely and your system doesn’t need major changes later.

HIPAA is often treated like a checklist, but at a basic level, it’s about protecting patient data. Who can see it, how it is stored, and how it is shared all matter. When teams ask, “How can I build an AI EHR MVP for my healthcare product idea?”, this is usually where they realize compliance starts with simple, clear rules.

At the MVP stage, security does not need to be complex, but it does need to be intentional. Users should only see what they need, and patient data should be protected during storage and transfer. Teams working on enterprise AI solutions often set these rules early so they do not have to fix structural issues later.
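For the “users should only see what they need” rule, a deny-by-default field filter is one simple starting point. The roles and field names in `VISIBLE_FIELDS` are hypothetical. This sketch covers access only; encryption during storage and transfer usually comes from the database and TLS configuration rather than from application code like this.

```python
# Hypothetical field visibility per role; adjust to your own workflow.
VISIBLE_FIELDS = {
    "clinician": {"patient_id", "name", "dob", "allergies", "notes"},
    "billing": {"patient_id", "name", "insurance_id"},
    "front_desk": {"patient_id", "name", "dob"},
}


def view_for_role(record: dict, role: str) -> dict:
    """Return only the fields this role may see; unknown roles see nothing."""
    allowed = VISIBLE_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "patient_id": "p-001",
    "name": "Jane Doe",
    "dob": "1984-07-02",
    "allergies": ["penicillin"],
    "notes": "Reports mild headache.",
    "insurance_id": "INS-12345",
}
print(view_for_role(record, "billing"))
# {'patient_id': 'p-001', 'name': 'Jane Doe', 'insurance_id': 'INS-12345'}
```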

Even if your MVP is small, it should not be isolated. Healthcare systems often need to exchange data, so it helps to structure your data in a way that can support standards like FHIR later. This does not mean building integrations now, but avoiding designs that block them.
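As an example of structuring data so it does not block FHIR later, an internal record can be mapped onto the shape of a FHIR R4 `Patient` resource. The sketch fills only a few standard elements (`id`, `name`, `gender`, `birthDate`); the internal field names are assumptions, and a real integration would also need validation against the specification.

```python
import json


def to_fhir_patient(record: dict) -> dict:
    """Map an internal record onto the shape of a FHIR R4 Patient resource.

    Internal field names (patient_id, first_name, ...) are hypothetical.
    """
    resource = {
        "resourceType": "Patient",
        "id": record["patient_id"],
        "name": [{"family": record["last_name"], "given": [record["first_name"]]}],
    }
    # FHIR administrative gender codes: male | female | other | unknown
    if record.get("sex") in {"male", "female", "other", "unknown"}:
        resource["gender"] = record["sex"]
    if record.get("dob"):
        resource["birthDate"] = record["dob"]  # stored here as YYYY-MM-DD
    return resource


internal = {
    "patient_id": "p-001",
    "first_name": "Jane",
    "last_name": "Doe",
    "sex": "female",
    "dob": "1984-07-02",
}
print(json.dumps(to_fhir_patient(internal), indent=2))
```

Keeping the mapping in one function means the internal data model can stay simple for the MVP while still having a clear path to a standard format.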

Trying to cover every compliance detail early can slow things down. A more practical approach is to focus on what is essential now and expand later. This is often a key factor when teams evaluate which company can develop an AI EHR MVP for healthcare businesses, as they need a balance between speed and responsibility.

Compliance at this stage is about being careful, not perfect. If the basics are in place, it becomes much easier to grow the product without running into bigger issues later.

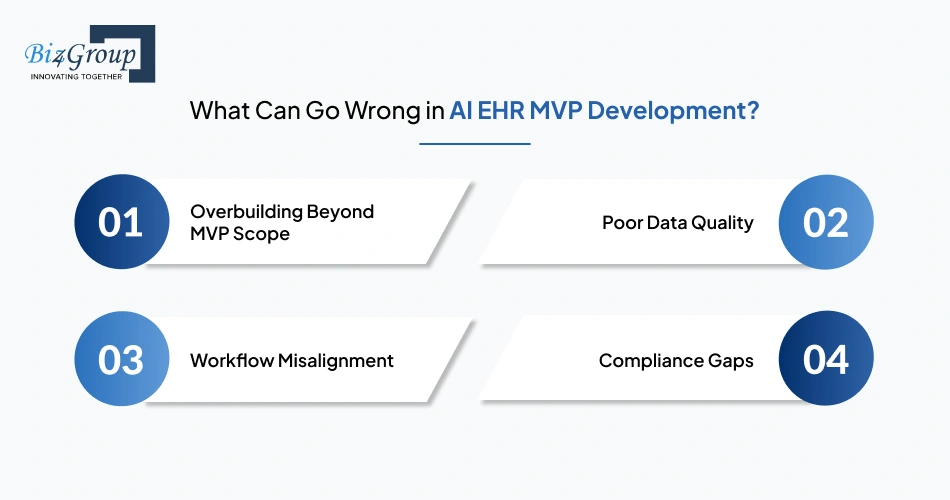

Even with a clear plan, things can go off track quickly in AI EHR MVP development. Most issues do not come from technology alone, but from early decisions around scope, data, workflows, and compliance. Catching these early makes a big difference.

| Risk Area | What It Looks Like | Why It Matters |
|---|---|---|
| Overbuilding Beyond MVP Scope | Too many features added too early | Slows development and makes testing harder |
| Poor Data Quality | Incomplete or inconsistent patient data | Leads to unreliable AI outputs |
| Workflow Misalignment | Product does not fit real clinical processes | Users avoid or work around the system |
| Compliance Gaps | Missing basic data protection or access controls | Creates risk and limits real-world use |

These issues often surface when teams start figuring out how to build an AI EHR MVP with clinical decision support features, especially because AI depends heavily on clean data and well-defined workflows. In many cases, even strong models, including those based on generative AI, fail when the underlying system is not structured properly.

In most cases, AI EHR MVP development takes around 8 to 12 weeks when the scope is clear and the workflow is well defined. The timeline is less about how fast a team can code, and more about how clearly the problem, data, and usage are understood from the start.

Not every MVP takes the same amount of time. The biggest difference usually comes from how much you are trying to build in the first version.

One workflow, limited data, and a single AI use case. These projects move faster because there are fewer decisions to make and fewer moving parts.

A bit more involved, with multiple roles and slightly more complex workflows. Coordination between different parts of the system starts to matter more here.

Multiple workflows, integrations, and heavier AI usage. These projects slow down because managing data and interactions becomes more difficult.

This is usually the point where teams start thinking about how to develop scalable AI EHR MVP for hospitals and clinics, since adding too much early can stretch timelines quickly.

Delays usually don’t happen because of coding. They happen when things are unclear or keep changing during development.

Teams working with a software development company in Florida often try to avoid these issues by locking the core workflow early and sticking to it.

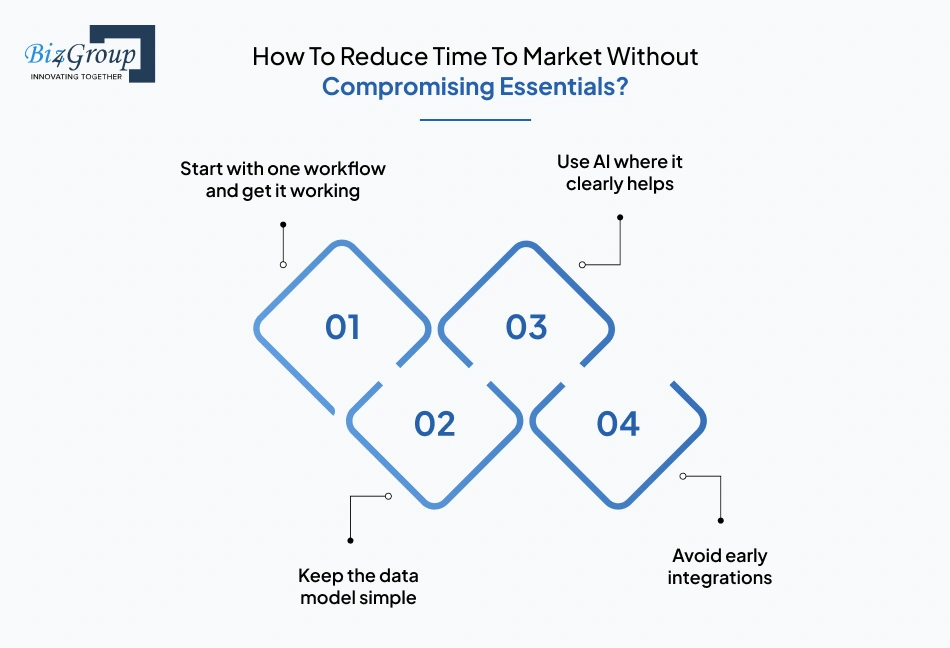

Moving faster is not about cutting corners. It is about staying focused on what actually matters in the MVP. Here’s all you need to know:

It is easier to build, test, and fix one workflow than manage several at once. Once that works, everything else becomes easier to add.

You don’t need to plan for every future case. A simple structure that supports your current workflow is enough to move forward.

If AI is not reducing effort or improving accuracy in a visible way, it probably does not belong in the MVP yet.

Connecting with other systems sounds useful, but it often slows things down. It is better to get the core product working first.

Some teams also look at business app development using AI to speed up parts of the build, but only after the core workflow is stable.

In the end, timelines stay predictable when decisions stay simple. When the scope is clear and tied to real workflows, it becomes much easier to move forward without delays, especially when creating an AI EHR MVP for patient records and automation that can grow over time.

The cost of building an MVP can vary quite a bit, but in most cases, AI EHR MVP development falls in the range of $30,000 to $100,000+. This is a ballpark figure, not a fixed price. The actual cost depends on how complex the workflow is, how much AI is involved, and how the system is designed.

| Cost Component | Estimated Range | What Drives the Cost |
|---|---|---|
| Scope & Workflow Complexity | $5,000 – $15,000 | Number of workflows, user roles, and overall feature scope |
| Data Modeling & Handling | $4,000 – $12,000 | Structuring patient data and handling real-world data variations |
| AI Integration | $5,000 – $20,000 | Type of AI use case, model complexity, and output requirements |
| UI/UX Design | $3,000 – $10,000 | Number of screens and how closely design follows real workflows |
| Development & Engineering | $8,000 – $30,000 | Core backend, frontend, and system build effort |
| Security & Compliance | $3,000 – $8,000 | Basic safeguards for handling patient data securely |
| Testing & Iteration | $2,000 – $10,000 | Fixes, refinements, and workflow validation before launch |
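The component ranges in the cost table roughly add up to the overall estimate, which is easy to check. This sketch just sums the low and high ends of each row; it assumes every component is needed, which a smaller MVP may not require.

```python
# Component ranges from the cost table, in USD as (low, high).
COST_COMPONENTS = {
    "Scope & Workflow Complexity": (5_000, 15_000),
    "Data Modeling & Handling": (4_000, 12_000),
    "AI Integration": (5_000, 20_000),
    "UI/UX Design": (3_000, 10_000),
    "Development & Engineering": (8_000, 30_000),
    "Security & Compliance": (3_000, 8_000),
    "Testing & Iteration": (2_000, 10_000),
}

total_low = sum(low for low, _ in COST_COMPONENTS.values())
total_high = sum(high for _, high in COST_COMPONENTS.values())
print(f"${total_low:,} to ${total_high:,}")  # $30,000 to $105,000
```

The totals, $30,000 to $105,000, line up with the $30,000 to $100,000+ ballpark.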

A simpler MVP with one workflow and limited AI will usually stay closer to the lower end of this range. As soon as you add more workflows, integrations, or advanced AI features, costs can increase quickly.

Some teams choose to hire AI developers early to handle specific parts of the system efficiently, especially when working with data-heavy features. Others keep the first version intentionally small to control both cost and complexity.

Teams that stay disciplined with scope tend to spend less and learn faster, especially when working with top AI EHR MVP development services for startups that focus on building usable MVPs instead of full-scale systems.

Choosing the right partner can shape how your product evolves from day one. In AI EHR MVP development, you’re not just hiring a team to build features; you’re working with people who will influence decisions around workflows, data handling, and AI usage. That’s why the choice matters early.

Before anything else, check whether the team can handle both sides of the product: EHR systems and AI. A few things usually stand out quickly:

If these pieces are missing, things tend to break later when systems become more complex.

This is where many teams look similar on paper but perform very differently. Remember to ask these questions for better clarity:

In AI-powered EHR MVP development, teams that understand both healthcare constraints and AI limitations tend to make better decisions early on.

Instead of focusing only on what they’ve built, try to understand how they think. For example:

The answers usually reveal more than portfolios. They show whether the team can guide decisions or not. This is especially useful when figuring out how to choose the right partner to build AI EHR MVP without relying only on credentials.

Good teams build what you ask for. Strong teams question it first. You’ll notice a difference in how they approach the problem:

Some teams also bring experience with AI agent implementation, which becomes useful when the system needs to handle tasks or decisions automatically.

In the end, the right partner is not just someone who can build the product, but someone who can help you build the right version of it.


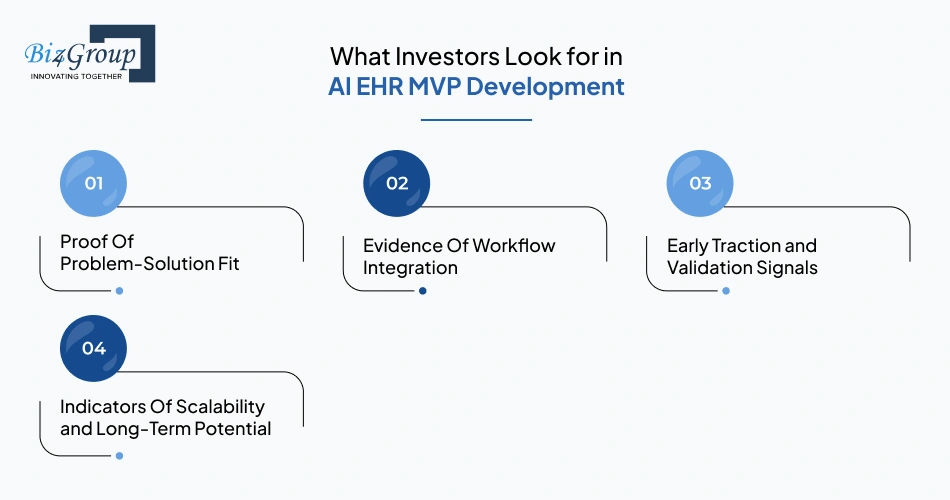

When investors look at early-stage products, they are not expecting a complete system. In AI EHR MVP development, they are trying to answer a simple question: is this worth building further? What matters most is whether the MVP shows real usage, fits into healthcare workflows, and has room to grow without starting over.

Investors want to see that you are solving something real, not just interesting. It helps if you can show where the problem shows up in day-to-day work, especially around patient data or documentation. When people talk about what investors look for in an AI EHR MVP, this is usually the first thing they try to understand.

A good product does not sit outside the workflow; it becomes part of it. Investors look for signs that your MVP fits into how clinicians or staff already work. If your product saves time or reduces effort without changing habits too much, that’s a strong signal.

You don’t need large numbers at this stage. Even small signs help, like users coming back, giving feedback, or relying on the product during their workflow. These signals show that the MVP is not just built, but actually used.

Investors also look ahead. They want to know if this can grow into something bigger without being rebuilt. That includes how your system handles data, how easily new workflows can be added, and whether the structure supports AI EHR MVP platform development over time.

Sometimes, teams also highlight how their product handles interaction and automation, similar to what you might see in an AI conversation app, especially if AI plays a visible role in the workflow.

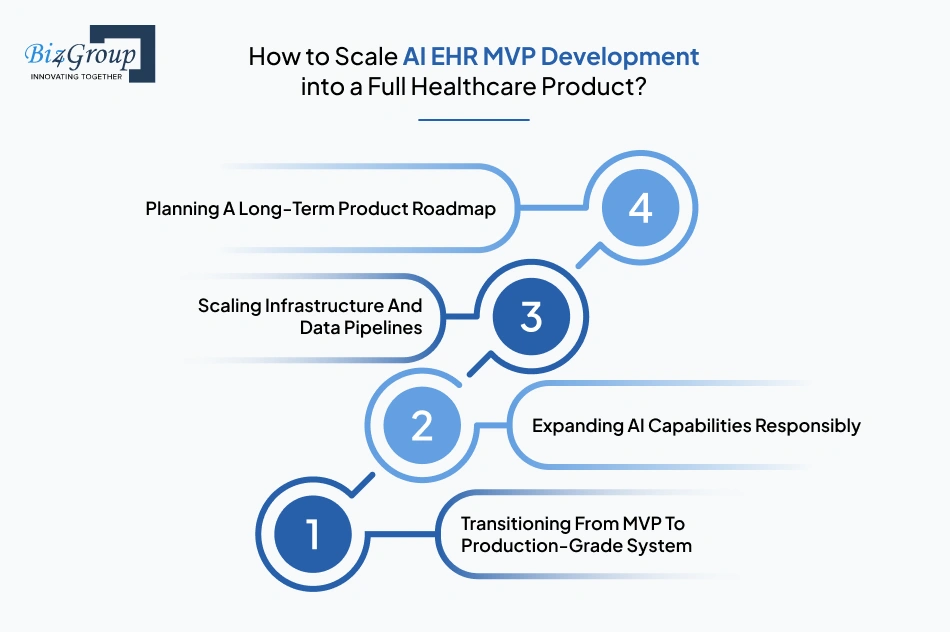

Once your MVP is working, the next question is simple: how do you grow this without breaking it? In AI EHR MVP development, scaling is less about adding more features and more about making sure the system can handle real usage, more users, more data, and more responsibility over time.

At the MVP stage, some rough edges are expected. But as you move forward, the system needs to become more stable and predictable. This means fixing issues that only show up with real usage, improving performance, and making sure the product works consistently across different scenarios.

It can be tempting to add more AI features once the MVP works, but that often creates more problems than value. A better approach is to improve what is already there. Many teams focus on refining existing outputs, especially when they understand what investors look for in an AI EHR MVP, which is usually consistency over complexity.

As more users start using the system, the pressure on data handling increases. What worked for a small group may not hold up under real load. This is where teams improve how data is stored, processed, and accessed, so the system stays responsive and reliable.

Scaling without a plan usually leads to scattered features and rework. A simple roadmap helps you decide what comes next and what can wait. It keeps the product focused and ensures that each step builds on the previous one, which is important in AI EHR MVP platform development.

Some teams also explore support from an AI chatbot development company when they start adding more interaction or automation layers, especially if communication becomes part of the workflow.

Scaling is all about doing the next right thing at the right time. When done carefully, the product grows without losing what made it useful in the first place.

AI EHR MVP development is about making a few clear decisions and staying disciplined. Teams that succeed usually focus on one workflow, keep the system simple, and avoid adding complexity before validating what works.

| Area | What To Do | What To Avoid |
|---|---|---|
| Scope | Focus on one workflow that solves a real problem | Trying to build multiple workflows at once |
| Data | Keep patient data structured and usable early | Overcomplicating data models for future use |
| AI Usage | Apply AI where it clearly adds value | Adding AI without a defined purpose |
| Development Approach | Build in small steps that can be easily tested and verified | Building everything before testing |
| Decision Making | Use real usage and feedback to guide changes | Relying only on assumptions or planning |

Keeping things simple early makes everything easier later. Teams that stay focused move faster, spend less, and learn more, especially when building an EHR MVP integrating AI and gradually building an AI-Powered EHR MVP that can scale over time.

Building an EHR MVP with AI requires more than just technical execution. It needs clarity in scope, understanding of healthcare workflows, and the ability to move fast without cutting corners. That’s where Biz4Group LLC comes in as an AI development company focused on practical, outcome-driven builds, especially in AI EHR MVP development.

We work with teams at the idea and MVP stage, helping them turn concepts into usable products that can be tested, validated, and scaled.

Here’s what sets us apart:

If you’re looking to move from idea to a working product with clarity and control, Biz4Group LLC provides the structure and expertise to get there.

Building an AI-powered EHR doesn’t have to feel like solving a puzzle with missing pieces. The process becomes much clearer once you focus on one workflow, keep the scope tight, and let real usage guide what comes next. That’s really what this entire guide comes down to.

The teams that succeed in this space are not the ones that build the most features first. They are the ones that build the right thing first, test it early, and improve it step by step. That’s the core of AI EHR MVP development.

And yes, while the goal is to build AI software that can scale and evolve, the real win is getting that first version right enough to learn from.

Ready to move from concept to a working AI-powered EHR MVP? Let’s build it together.

Yes, an MVP does not require large-scale datasets. It can start with limited, structured data or even simulated datasets to test workflows. The focus is on validating functionality, not training highly complex AI models at the initial stage.

Early-stage MVPs often use anonymized, synthetic, or restricted datasets to reduce risk. Access is typically limited to specific users, and basic safeguards like encryption and role-based access are implemented from the beginning.
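For early workflow testing without real patient data, synthetic records can be generated from fixed lists. This is a minimal sketch: the names, symptoms, note templates, and the `synthetic_patients` function are all made up, and the data makes no attempt to be clinically realistic.

```python
import random
from datetime import date, timedelta

# Entirely made-up building blocks; no real patient information.
FIRST_NAMES = ["Alex", "Sam", "Jordan", "Taylor", "Morgan"]
LAST_NAMES = ["Rivera", "Chen", "Patel", "Okafor", "Smith"]
SYMPTOMS = ["mild headache", "fatigue", "seasonal allergies", "no new complaints"]
NOTE_TEMPLATES = [
    "Follow-up visit. Patient reports {symptom}.",
    "Routine check. Noted: {symptom}.",
]


def synthetic_patients(n: int, seed: int = 42) -> list:
    """Generate n fake patient records for workflow testing."""
    rng = random.Random(seed)  # fixed seed keeps test runs reproducible
    earliest_dob = date(1940, 1, 1)
    patients = []
    for i in range(n):
        dob = earliest_dob + timedelta(days=rng.randrange(365 * 80))
        patients.append({
            "patient_id": f"synthetic-{i:04d}",
            "name": f"{rng.choice(FIRST_NAMES)} {rng.choice(LAST_NAMES)}",
            "dob": dob.isoformat(),
            "note": rng.choice(NOTE_TEMPLATES).format(symptom=rng.choice(SYMPTOMS)),
        })
    return patients


for patient in synthetic_patients(3):
    print(patient)
```

The fixed seed is a deliberate choice: the same test data comes back on every run, so a change in workflow behavior points to the code rather than the data.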

Not always. Many MVPs are tested in controlled environments or internal settings where full regulatory approval may not be required. However, compliance considerations should still be built into the system from the start to avoid rework later.

Most MVPs use simpler approaches such as rule-based logic, classification models, or basic natural language processing. Complex models are usually introduced later, once the workflow and data quality are validated.
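As an illustration of the rule-based end of that spectrum, checks can be written as named predicates over a record. The rules below are hypothetical examples of the “flag issues” idea, not clinical guidance; the medication check, for instance, only catches exact name matches.

```python
from typing import Callable, List, Tuple

# Each rule is (message, check). Illustrative examples only, not clinical guidance.
Rule = Tuple[str, Callable[[dict], bool]]


def _lower_set(values) -> set:
    """Case-insensitive set of strings; treats a missing list as empty."""
    return {str(v).lower() for v in (values or [])}


RULES: List[Rule] = [
    ("Allergies not documented", lambda r: not r.get("allergies")),
    (
        "Medication name matches a recorded allergy",
        lambda r: bool(_lower_set(r.get("medications")) & _lower_set(r.get("allergies"))),
    ),
    ("No note recorded for this encounter", lambda r: not (r.get("note") or "").strip()),
]


def run_rules(record: dict, rules: List[Rule] = RULES) -> List[str]:
    """Return the message of every rule that fires for this record."""
    return [message for message, check in rules if check(record)]


record = {
    "allergies": ["Penicillin"],
    "medications": ["penicillin", "ibuprofen"],
    "note": "",
}
print(run_rules(record))
# ['Medication name matches a recorded allergy', 'No note recorded for this encounter']
```

Rules like these are easy to explain and test, which is why they can serve as a baseline before any trained model is introduced.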

The cost usually ranges between $30,000 and $100,000+, depending on scope, data complexity, and AI features. A focused MVP with one workflow will cost less, while adding more workflows or advanced AI capabilities increases the overall investment.

Yes, but it depends on how the MVP is designed. If the data structure and architecture follow common standards, integration can be added later without major changes. This is why early system design decisions are important.
