
What happens when an AI idea sounds promising but its real performance in legal workflows is still uncertain? Many legal teams face this moment while exploring automation for case file review, research support, case management, or document analysis tools. Jumping straight into product development often creates risk. That is why organizations begin with AI PoC development for legal software before committing to a larger system.

A proof of concept allows teams to test whether an AI capability works in real legal scenarios. Instead of building a full platform, the team stays focused on validating a specific outcome. This early validation plays a critical role in AI PoC development for legal software, especially when the accuracy and reliability of legal data matter.

Legal technology companies and innovation teams typically pursue legal tech AI PoC development to answer practical questions such as:

Many firms collaborate with a custom software development company during this phase to build AI PoC models that can be tested quickly and safely. Understanding how this validation stage works makes it easier to evaluate AI investments. Let’s begin by clarifying what AI PoC development actually means in the context of legal software.

AI PoC development for legal software refers to creating a small experimental system that tests whether an AI capability can work with real legal data and workflows. The goal is to validate feasibility before teams build AI-powered legal software at the full product level.

In legal environments, this stage focuses on verifying how AI performs within practical tasks. It helps product teams understand whether AI integration can support real legal operations.

Typical capabilities validated during AI PoC development include:

Organizations often validate these capabilities before they build AI software meant for production legal systems. To understand how these experimental systems are structured, it helps to look at the underlying AI PoC architecture used in legal software environments.

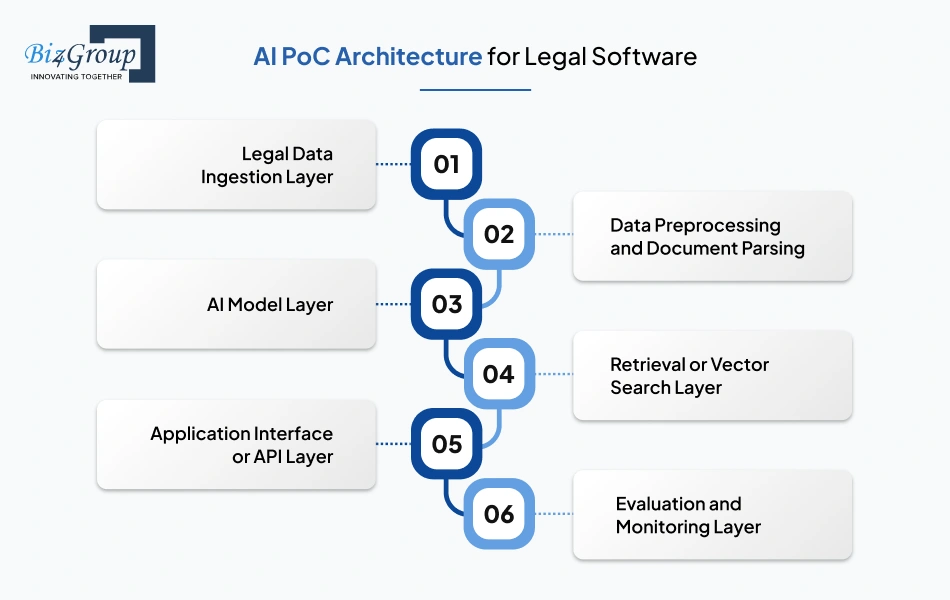

Early validation of legal AI ideas requires a clear technical structure that shows how data flows, how AI analyzes legal content, and how results reach users. When teams begin AI PoC development for legal software, the architecture ensures each component supports controlled experimentation and reliable testing.

A structured architecture like this keeps experimentation organized and transparent. It helps legal teams clearly understand how data flows through the system and how AI interacts with real legal documents during early validation.
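To make that flow concrete, here is a minimal Python sketch that models each layer as a plain function: ingestion splits raw text into sections, an analysis step flags sections of interest, and a presentation step formats findings for reviewers. The function names are hypothetical, and the keyword match in `analyze` is only a placeholder for the model layer a real PoC would call.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    """A legal document after ingestion (hypothetical structure)."""
    doc_id: str
    raw_text: str
    sections: list[str] = field(default_factory=list)


def ingest(doc_id: str, raw_text: str) -> Document:
    """Ingestion layer: split raw text into paragraph-level sections."""
    sections = [part.strip() for part in raw_text.split("\n\n") if part.strip()]
    return Document(doc_id=doc_id, raw_text=raw_text, sections=sections)


def analyze(doc: Document, keywords: list[str]) -> list[tuple[int, str]]:
    """Analysis layer placeholder: flag sections that mention any keyword.

    A real PoC would call an NLP or LLM component here instead.
    """
    hits = []
    for index, section in enumerate(doc.sections):
        lowered = section.lower()
        if any(keyword.lower() in lowered for keyword in keywords):
            hits.append((index, section))
    return hits


def present(doc: Document, hits: list[tuple[int, str]]) -> str:
    """Presentation layer: format findings for internal reviewers."""
    lines = [f"{doc.doc_id}: {len(hits)} flagged section(s)"]
    for index, section in hits:
        lines.append(f"  [section {index}] {section[:60]}")
    return "\n".join(lines)


if __name__ == "__main__":
    contract = (
        "The parties agree as follows.\n\n"
        "Either party may terminate with 30 days notice.\n\n"
        "Governing law: New York."
    )
    doc = ingest("contract-001", contract)
    print(present(doc, analyze(doc, ["terminate", "governing law"])))
```

Keeping each layer behind its own function makes it straightforward to swap the placeholder analysis for an LLM or NLP component later without touching ingestion or presentation.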

Early development stages in legal software often get confused. Teams frequently use the terms PoC, prototype, and MVP interchangeably. Each stage, however, serves a different purpose and appears at a different point in the development journey.

PoC: In AI PoC development for legal software, the first objective is validation. A proof of concept tests whether a specific AI capability can technically work with legal data. Teams build an AI proof of concept for legal software to confirm feasibility before committing to full product development. The system at this stage is minimal and usually used only by internal teams.

Prototype: A prototype comes after the concept is validated. The focus shifts from technical feasibility to product experience. Teams begin shaping how the system will look and how users might interact with it. The prototype demonstrates workflows and interface ideas, but it is not yet a fully functional product.

MVP: An MVP represents the first usable version of the product. It includes only the essential features required for real users. Many organizations move into structured MVP software development once early validation is complete and the product direction becomes clearer.

| Stage | Purpose | Development Phase | Technical Maturity | Primary Users |
|---|---|---|---|---|
| PoC | Validate whether the AI capability works | Earliest validation phase | Experimental | Internal technical teams |
| Prototype | Demonstrate how the product will function | Design and concept validation | Partially functional | Product teams and stakeholders |
| MVP | Deliver a usable product with core functionality | Initial product release | Production-ready foundation | Early users and pilot customers |

Legal software companies often build an AI-driven proof of concept first to validate feasibility before progressing toward product development.

AI adoption in legal software often begins with careful validation rather than full product development. Law firms and legal tech companies want clarity before committing resources to large systems. A structured proof of concept helps them evaluate whether an AI idea can realistically support legal workflows.

Legal documents contain dense language, long clauses, and complex structure. Teams must confirm that an AI model can actually interpret this material before investing in a complete system. Through AI PoC development for legal software, organizations test whether AI can process contracts, case files, or legal research inputs in a reliable way.

This early validation helps confirm that the technical direction is viable.

AI systems behave differently from traditional software. Performance depends on data quality, model behavior, and workflow design. A proof of concept allows teams to test these factors in a controlled environment.

This step answers a practical question many business owners ask: when should a law firm invest in AI proof of concept development rather than committing directly to a full product?

Building a full AI platform requires significant engineering effort and infrastructure planning. Law firms often begin with a smaller experiment so they can evaluate feasibility before committing long-term resources.

This staged approach helps organizations manage AI software cost more carefully during the early phases of product planning.

Even if a model performs well technically, it must still align with real legal operations. A proof of concept allows teams to observe how AI interacts with everyday tasks.

Testing workflows early ensures the technology supports real legal processes.

Accuracy matters significantly in legal environments. Incorrect outputs can quickly reduce trust in the system. A PoC stage allows organizations to measure how well the AI performs before building larger applications.

This evaluation helps determine whether the technology is reliable enough for further development.

Legal organizations operate within structured procedures and compliance requirements. AI initiatives must support these realities rather than disrupt them. Early testing helps teams determine whether AI outputs match operational expectations.

Alignment at this stage ensures the technology supports legal work instead of complicating it.

Another reason organizations begin with a PoC is financial planning. AI projects can expand quickly in scope, especially when multiple systems and integrations are involved. A proof of concept provides a controlled environment for evaluating feasibility before larger spending begins.

This approach helps organizations develop a scalable AI PoC for legal software platforms while keeping development within a defined budget.

AI initiatives in legal technology require careful evaluation before large-scale implementation. A proof of concept gives law firms the opportunity to test feasibility, assess risk, and refine direction before committing to full product development.

Legal organizations often evaluate AI initiatives through structured experimentation before moving toward full product development. This early validation stage provides measurable outcomes that guide product direction, reduce uncertainty, and support informed technology investment decisions.

Clear outcomes from early experimentation help legal technology teams evaluate feasibility, refine product direction, and reduce uncertainty. These benefits allow organizations to approach AI innovation with confidence while preparing for scalable implementation.

Many legal technology teams create an AI proof of concept for law firm software to observe how AI handles real legal documents and user queries. A PoC should include a few focused capabilities that allow teams to evaluate system behavior before expanding development.

| Core Feature | Purpose in the PoC |
|---|---|
| Legal document ingestion and parsing | Converts uploaded contracts, agreements, and case files into structured text that the system can process. |
| Document analysis and classification | Identifies document type and organizes legal information into logical categories. |
| Clause extraction capability | Detects important clauses within contracts so reviewers can locate key sections quickly. |
| Semantic search and knowledge retrieval | Allows users to retrieve relevant passages from legal documents using meaning rather than exact keywords. |
| Natural language query support | Enables legal professionals to ask questions in plain language and receive relevant document insights. |
| Evaluation interface for testing outputs | Provides a controlled environment where teams can review responses and verify the reliability of results during AI PoC development for legal software. |

A focused set of features helps teams evaluate whether the system can interpret legal language, retrieve meaningful insights, and respond accurately to user queries. These capabilities allow organizations to validate performance and gradually refine the AI models behind a legal software PoC before expanding the system further.
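A rule-based baseline is a useful yardstick for the clause extraction capability in the table above: if a model cannot beat simple patterns on the same documents, that is an early warning sign. The sketch below assumes contracts use numbered clause headings at the start of lines; the clause types and trigger phrases are hypothetical examples, not a legal taxonomy.

```python
import re

# Hypothetical map from clause type to trigger phrases.
CLAUSE_PATTERNS = {
    "termination": re.compile(r"\bterminat(?:e|es|ed|ion)\b", re.IGNORECASE),
    "confidentiality": re.compile(r"\bconfidential(?:ity)?\b", re.IGNORECASE),
    "governing_law": re.compile(r"\bgoverning\s+law\b|\bgoverned\s+by\b", re.IGNORECASE),
    "indemnification": re.compile(r"\bindemnif(?:y|ies|ication)\b", re.IGNORECASE),
}

# Zero-width split point before numbered headings such as "1." or "12.3" at line start.
CLAUSE_SPLIT = re.compile(r"^(?=\d+(?:\.\d+)*\.?\s)", re.MULTILINE)


def extract_clauses(contract_text: str) -> dict[str, list[str]]:
    """Split a contract into numbered clauses and tag each with matching clause types."""
    found: dict[str, list[str]] = {name: [] for name in CLAUSE_PATTERNS}
    for chunk in CLAUSE_SPLIT.split(contract_text):
        clause = chunk.strip()
        if not clause:
            continue
        for name, pattern in CLAUSE_PATTERNS.items():
            if pattern.search(clause):
                found[name].append(clause)
    # Only report clause types that were actually found.
    return {name: clauses for name, clauses in found.items() if clauses}
```

Splitting on any line that begins with a number is naive (a wrapped line that happens to start with a figure would also split), which is acceptable for a baseline but not for production.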

A structured workflow helps legal technology teams test AI ideas without jumping into full product development. Many organizations create an AI proof of concept for law firm automation to understand how AI behaves with real legal documents and internal processes.

The process starts by identifying the legal task the PoC will address. A clear use case keeps experimentation focused and prevents the project from expanding too quickly.
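One way to keep that use case from drifting is to write the scope and its success criteria down as data the team can check results against. The sketch below assumes a clause-extraction use case; the field names and the thresholds (an F1 of 0.85, a 10-second latency ceiling) are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PocScope:
    """Hypothetical written scope for a single-use-case legal AI PoC."""
    use_case: str
    document_types: tuple[str, ...]
    min_f1: float          # minimum acceptable extraction F1 score
    max_latency_s: float   # maximum acceptable p95 processing time per document, in seconds


def in_scope(scope: PocScope, doc_type: str) -> bool:
    """Check whether a document type falls inside the agreed scope."""
    return doc_type in scope.document_types


def unmet_criteria(scope: PocScope, f1: float, p95_latency_s: float) -> list[str]:
    """Return the criteria the PoC missed; an empty list means it passed."""
    failures = []
    if f1 < scope.min_f1:
        failures.append(f"F1 {f1:.2f} below target {scope.min_f1:.2f}")
    if p95_latency_s > scope.max_latency_s:
        failures.append(f"p95 latency {p95_latency_s:.1f}s above limit {scope.max_latency_s:.1f}s")
    return failures


scope = PocScope(
    use_case="clause extraction from commercial leases",
    document_types=("lease",),
    min_f1=0.85,         # illustrative threshold
    max_latency_s=10.0,  # illustrative threshold
)
print(unmet_criteria(scope, f1=0.81, p95_latency_s=6.2))  # ['F1 0.81 below target 0.85']
```

Writing the criteria down before experiments start gives the final go/no-go discussion a fixed reference point.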

AI systems rely on real legal data to perform effectively. Teams gather documents that represent the type of information the AI will process during testing.

Legal documents often contain formatting issues and inconsistent structures. Preparing the dataset ensures that the system can interpret the information correctly.
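A minimal cleanup pass for text extracted from PDFs might look like the following. It assumes extraction has already produced plain text; the rules (dropping page-number lines, rejoining hyphenated line breaks, unwrapping hard line breaks) are illustrative heuristics rather than a complete preprocessing pipeline.

```python
import re

# Lines that contain only a page marker such as "7" or "Page 3 of 12".
PAGE_NUMBER_LINE = re.compile(r"^\s*(?:page\s+)?\d+(?:\s+of\s+\d+)?\s*$", re.IGNORECASE)


def normalize_text(raw: str) -> str:
    """Clean common PDF-extraction artifacts while keeping paragraph breaks."""
    lines = [line for line in raw.splitlines() if not PAGE_NUMBER_LINE.match(line)]
    text = "\n".join(lines)
    # Rejoin words hyphenated across line breaks: "termi-\nnate" -> "terminate".
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)
    # Turn single line breaks inside a paragraph into spaces; blank lines stay as breaks.
    text = re.sub(r"(?<!\n)\n(?!\n)", " ", text)
    # Collapse runs of spaces and tabs.
    text = re.sub(r"[ \t]+", " ", text)
    return text.strip()
```

Each rule is a trade-off: the hyphen rule also merges genuine compounds split at a line end (for example `self-` followed by `executing`), and a line holding only a clause number would be dropped as a page number, so the rules should be checked against a sample of the firm's own documents.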

Once the data is ready, teams select the AI model that will interpret the documents. The goal is to test whether the model can understand legal language and produce meaningful results.

A controlled experimental environment allows teams to observe how the AI behaves during testing. The system does not need full product functionality.

Internal reviewers need a simple way to interact with the PoC. A basic interface allows teams to upload documents and review results.


The next stage focuses on understanding how well the AI performs. Teams review outputs and identify areas where the system behaves correctly or produces errors.

Legal professionals play an important role in reviewing the outputs generated by the system. Their feedback confirms whether the AI results make sense in a legal context.
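Expert feedback is easier to act on when verdicts are tallied per output. A small sketch, assuming each reviewer marks an output as `accept` or `reject` (a hypothetical two-value scheme): outputs that reviewers disagree on are listed separately, since disagreement may indicate an ambiguous case rather than a plain model error.

```python
from collections import defaultdict

VERDICTS = {"accept", "reject"}  # hypothetical two-value review scheme


def summarize_reviews(reviews: list[tuple[str, str, str]]) -> dict:
    """Summarize (output_id, reviewer, verdict) records from legal reviewers."""
    by_output: dict[str, list[str]] = defaultdict(list)
    for output_id, reviewer, verdict in reviews:
        if verdict not in VERDICTS:
            raise ValueError(f"{reviewer} gave unknown verdict {verdict!r} for {output_id}")
        by_output[output_id].append(verdict)

    accepted = sorted(o for o, v in by_output.items() if set(v) == {"accept"})
    rejected = sorted(o for o, v in by_output.items() if set(v) == {"reject"})
    disputed = sorted(o for o, v in by_output.items() if len(set(v)) > 1)
    return {
        "acceptance_rate": len(accepted) / len(by_output) if by_output else 0.0,
        "accepted": accepted,
        "rejected": rejected,
        "disputed": disputed,  # reviewers disagreed; worth a joint discussion
    }
```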

A structured workflow allows teams to run controlled experiments during AI PoC development for legal software. Many organizations follow this approach to create AI PoC solutions for legal applications before moving toward custom, production-scale development.

Testing legal AI ideas requires a focused technology environment where models, data pipelines, and lightweight application layers work together. The stack used in AI PoC development for legal software is designed to support experimentation with legal documents and queries.

| Architecture Layer | Technology Used | Purpose |
|---|---|---|
| AI Model Layer | Python-based LLM frameworks | Process legal language, interpret queries, and generate contextual outputs |
| NLP Processing Layer | NLP libraries and legal text processing tools | Extract entities, clauses, and structured information from legal documents |
| Vector Search Layer | Vector databases or libraries such as Pinecone, Weaviate, or FAISS | Enable semantic search so the system retrieves relevant legal documents |
| Backend Services | Node.js application services | Manage API communication and coordinate requests between AI models and data sources |
| Application Interface | | Provide a testing interface where teams interact with the PoC system |
| Infrastructure Layer | Cloud platforms such as AWS or Azure | Run models, manage compute resources, and store legal datasets |
| Document Processing Layer | PDF parsing and document extraction libraries | Convert contracts and legal files into machine-readable text for analysis |

The stack above allows teams to build AI PoCs for legal tech startups while keeping the environment flexible for experimentation. It also supports scenarios where organizations build an AI PoC for legal document analysis software before scaling the system further.
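The vector search layer can be prototyped before any database is chosen. The sketch below substitutes TF-IDF vectors and cosine similarity for learned embeddings, so it matches shared words rather than meaning; it only illustrates the index-and-rank flow that a vector search library or database such as FAISS, Pinecone, or Weaviate would handle using embeddings from a model. The class and method names are hypothetical.

```python
import math
import re
from collections import Counter

TOKEN = re.compile(r"[a-z]+")


def tokenize(text: str) -> list[str]:
    return TOKEN.findall(text.lower())


class TfidfIndex:
    """In-memory stand-in for a vector store: TF-IDF vectors ranked by cosine similarity.

    TF-IDF matches shared words, not meaning; a real PoC would replace
    `_vectorize` with an embedding model and the list scan with a vector index.
    """

    def __init__(self, passages: list[str]):
        self.passages = passages
        token_lists = [tokenize(p) for p in passages]
        doc_freq = Counter(term for tokens in token_lists for term in set(tokens))
        n = len(passages)
        # Smoothed IDF: rarer terms get more weight, common terms never drop to zero.
        self.idf = {t: math.log((1 + n) / (1 + df)) + 1.0 for t, df in doc_freq.items()}
        self.vectors = [self._vectorize(tokens) for tokens in token_lists]

    def _vectorize(self, tokens: list[str]) -> dict[str, float]:
        counts = Counter(tokens)
        vec = {t: c * self.idf[t] for t, c in counts.items() if t in self.idf}
        norm = math.sqrt(sum(w * w for w in vec.values()))
        # Unit-length vectors make the dot product equal to cosine similarity.
        return {t: w / norm for t, w in vec.items()} if norm else {}

    def search(self, query: str, top_k: int = 3) -> list[tuple[float, str]]:
        q = self._vectorize(tokenize(query))
        scored = [
            (sum(w * vec.get(t, 0.0) for t, w in q.items()), passage)
            for vec, passage in zip(self.vectors, self.passages)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:top_k]
```

Because callers only depend on `search(query, top_k)` returning `(score, passage)` pairs, a replacement that keeps that interface can swap in real embeddings and a vector index without changing the calling code.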

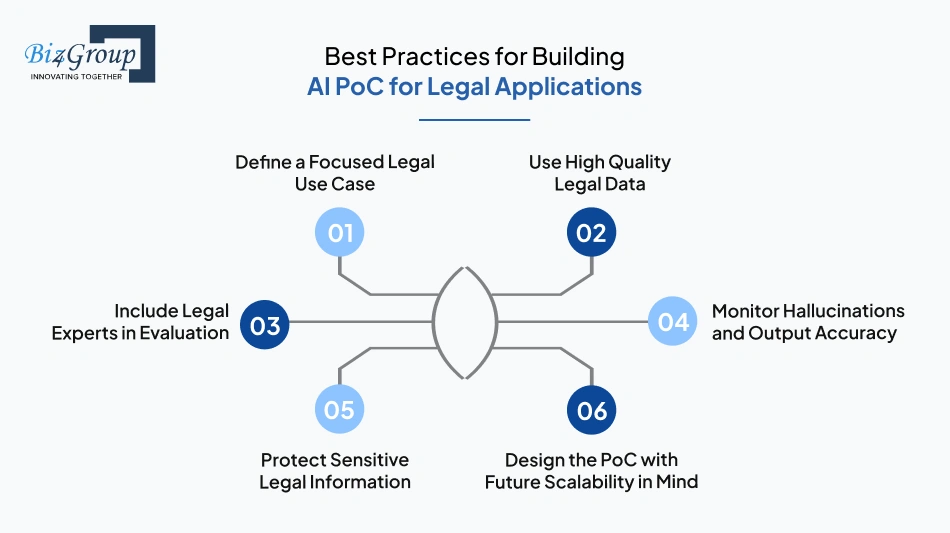

Early experimentation in legal AI requires disciplined planning and careful validation. Organizations working on AI PoC development for legal software often achieve better results when the PoC remains focused, reliable, and aligned with real legal workflows.

A PoC works best when it addresses a specific legal task rather than a broad platform idea. Clear scope prevents unnecessary experimentation and helps teams test feasibility quickly. This is often the best approach for legal tech startups, where resources are limited and validation speed matters.

The accuracy of a legal AI system depends heavily on the quality of the documents used during testing. Contracts, case records, or regulatory filings should represent real legal scenarios. Clean and well-structured datasets help the PoC produce reliable outputs.

Legal professionals play a critical role when evaluating early AI outputs. Their feedback helps determine whether the system interprets legal language correctly. Many organizations working with AI consulting specialists involve domain experts during PoC validation to improve reliability.

AI models sometimes generate incorrect information while appearing confident. Regular review of AI responses helps identify these issues early, which makes continuous validation a core part of any successful AI PoC for legal applications.
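One inexpensive guard is to require answers to quote their sources and then check each quote mechanically. The sketch below assumes answers wrap quoted source text in straight double quotes; it flags answers with no quotes and quotes that do not appear verbatim (ignoring case and whitespace) in the supplied documents. The function names are hypothetical, and passing this check does not prove the surrounding reasoning is correct.

```python
import re

QUOTE = re.compile(r'"([^"]+)"')


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so line wraps do not break matching."""
    return " ".join(text.lower().split())


def unsupported_quotes(answer: str, sources: list[str]) -> list[str]:
    """Quoted passages in the answer that appear verbatim in none of the sources."""
    normalized_sources = [_normalize(s) for s in sources]
    return [
        quote for quote in QUOTE.findall(answer)
        if not any(_normalize(quote) in src for src in normalized_sources)
    ]


def review_answer(answer: str, sources: list[str]) -> str:
    """Return 'ok' or a short reason the answer should go to a human reviewer."""
    if not QUOTE.search(answer):
        return "flag: no quoted source text"
    missing = unsupported_quotes(answer, sources)
    return f"flag: {len(missing)} unsupported quote(s)" if missing else "ok"
```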

Legal documents often contain confidential information. Even a PoC environment should enforce data protection practices such as restricted access and secure storage. Organizations that hire AI developers with legal industry expertise usually implement privacy safeguards early in the experimentation phase.
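Masking obvious identifiers before documents enter the PoC is one such safeguard. The regular expressions below are illustrative assumptions covering email addresses, US-style Social Security numbers, and US-style phone numbers only; names, addresses, and matter-specific identifiers would need a dedicated PII detection tool.

```python
import re

# Illustrative patterns only: email addresses, US-style SSNs, and US-style phone numbers.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE", re.compile(r"(?<!\w)(?:\+1[ .-]?)?\(?\d{3}\)?[ .-]?\d{3}[ .-]\d{4}\b")),
]


def mask_pii(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count masks per label."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS:
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


masked, counts = mask_pii("Contact Jane at jane.doe@example.com or (212) 555-0187. SSN 123-45-6789.")
print(masked)  # Contact Jane at [EMAIL] or [PHONE]. SSN [SSN].
print(counts)  # {'EMAIL': 1, 'SSN': 1, 'PHONE': 1}
```

In the example, the first name "Jane" passes through untouched, which is exactly the kind of gap regex-only masking leaves.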

Although a PoC is experimental, its structure should not limit future expansion. Planning for growth helps ensure the system can evolve into a stable legal software platform once the concept proves reliable.

Clear measurement helps teams understand whether the PoC actually performs well in real legal scenarios. During AI PoC development for legal software, teams observe specific indicators that reflect output quality, stability, and reliability when the system processes legal documents and queries.

Consistent measurement across these indicators gives a realistic picture of PoC performance. When teams track accuracy, stability, and validation feedback carefully, they gain a clear understanding of whether the experimental system meets expectations for legal workflows.
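As one example of what such indicators can look like in code, the sketch below scores clause extraction as set overlap between predicted and expert-labeled `(document ID, clause type)` pairs and reports a nearest-rank 95th-percentile processing time. The pair representation and the choice of p95 are assumptions about how a team might define accuracy and responsiveness, not a standard.

```python
import math
from dataclasses import dataclass


@dataclass
class EvalReport:
    precision: float
    recall: float
    f1: float
    p95_latency_s: float


def evaluate(predicted: set[tuple[str, str]],
             gold: set[tuple[str, str]],
             latencies_s: list[float]) -> EvalReport:
    """Score predicted (doc_id, clause_type) pairs against expert-labeled pairs."""
    true_pos = len(predicted & gold)
    precision = true_pos / len(predicted) if predicted else 0.0
    recall = true_pos / len(gold) if gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # Nearest-rank 95th percentile of per-document processing time.
    ordered = sorted(latencies_s)
    p95 = ordered[math.ceil(0.95 * len(ordered)) - 1] if ordered else 0.0
    return EvalReport(precision, recall, f1, p95)
```

Precision shows how often flagged clauses are real; recall shows how many real clauses were found. Legal reviewers may weigh missed clauses more heavily than false alarms, so it is worth reporting both rather than F1 alone.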


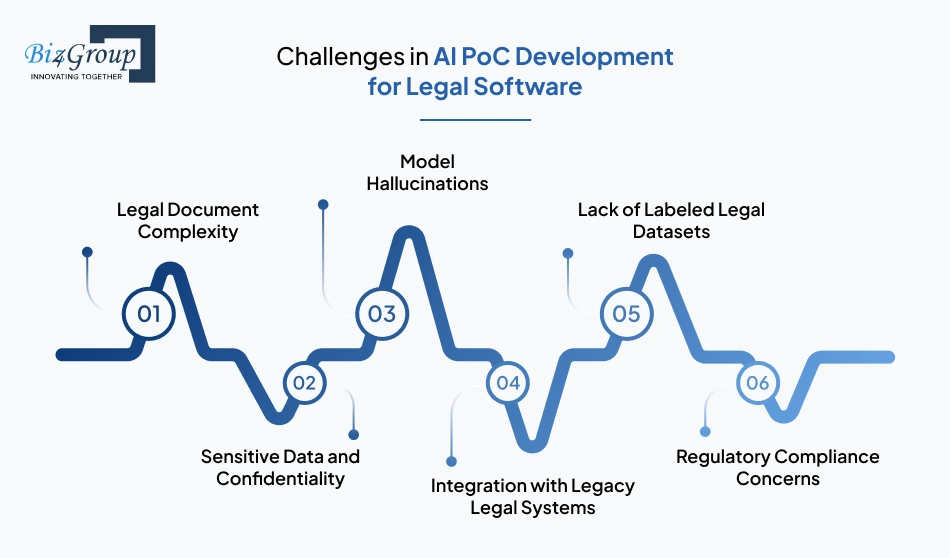

Early experimentation with legal AI often reveals practical barriers that are not visible at the planning stage. Teams working on AI PoC development for legal software usually encounter issues related to data quality, system compatibility, and regulatory expectations. Addressing these problems early helps teams move forward with clearer direction during AI legal software product PoC development.

|

Challenge |

Solution |

|---|---|

|

Legal Document Complexity |

Standardize document formats before processing. Use structured text extraction to convert PDFs, scans, and filings into clean machine-readable text so the AI system can interpret legal clauses accurately. |

|

Sensitive Data and Confidentiality |

Use anonymized or masked datasets during testing. Restrict access to PoC environments and store documents in secure systems managed by an experienced AI development company handling legal data. |

|

Model Hallucinations |

Limit responses to verified source documents. Apply controlled prompts and document-grounded retrieval so the system only generates outputs supported by the provided legal material. |

|

Integration with Legacy Legal Systems |

Connect the PoC environment through APIs or structured data exports. This allows the system to access case files or contract repositories without modifying existing legal platforms. |

|

Lack of Labeled Legal Datasets |

Prepare a small reviewed dataset where clauses, entities, and document categories are clearly tagged. Use this dataset for initial training and testing of the PoC models. |

|

Regulatory Compliance Concerns |

Apply strict access controls, encrypted storage, and internal compliance review before processing legal documents within the PoC environment. |

Real-world experimentation often exposes these operational barriers before a full product is launched. Identifying challenges early allows teams to adjust data preparation, system design, and validation workflows while the project is still in its experimental stage.
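For the labeled-dataset challenge in particular, two details tend to matter early: a fixed label set that reviewers tag against, and a train/test split that keeps every clause from the same contract on one side, so the model is never tested on documents it effectively saw during training. The sketch below assumes records stored as JSON Lines with hypothetical `doc_id`, `text`, and `label` fields, and hashes the document ID to assign its split deterministically.

```python
import hashlib
import json

# Hypothetical label schema agreed with legal reviewers.
ALLOWED_LABELS = {"termination", "confidentiality", "governing_law", "indemnification"}


def load_labeled(jsonl_text: str) -> list[dict]:
    """Parse JSON Lines records and reject labels outside the agreed schema."""
    records = []
    for line_no, line in enumerate(jsonl_text.splitlines(), start=1):
        if not line.strip():
            continue
        record = json.loads(line)
        if record["label"] not in ALLOWED_LABELS:
            raise ValueError(f"line {line_no}: unknown label {record['label']!r}")
        records.append(record)
    return records


def split_by_document(records: list[dict],
                      test_fraction: float = 0.2) -> tuple[list[dict], list[dict]]:
    """Assign whole documents to train or test so one contract never spans both."""
    train, test = [], []
    for record in records:
        digest = hashlib.sha256(record["doc_id"].encode("utf-8")).digest()
        bucket = digest[0] / 256  # stable value in [0, 1) for each document
        (test if bucket < test_fraction else train).append(record)
    return train, test
```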

Early experimentation with AI in legal systems requires both technical depth and clear product thinking. At Biz4Group LLC, our teams work closely with legal product owners to validate ideas through structured AI PoC development for legal software. Here is why you should choose us for AI PoC development for legal software:

Work across enterprise AI systems has shaped how we approach experimentation in legal tech environments. Our teams focus on validating real document workflows, search logic, and automation scenarios before committing to larger platform development.

As a legal software development company, we have developed a deep understanding of document pipelines, data handling rules, and workflow systems used in law firms. A quick look at one of our legal solutions will help you understand how these systems operate in real legal environments.

Court Calendar: The Court Calendar platform helps law firms and legal teams track hearings, manage schedules, and coordinate case timelines from a single system. It organizes court dates, case updates, and attorney availability so teams can avoid missed deadlines and scheduling conflicts.

Working on systems like this strengthens our understanding of legal workflows, document handling patterns, and operational requirements that influence how we design PoC experiments for legal software environments.

A disciplined validation process guides how we approach AI legal software product PoC development. Our portfolio demonstrates how early experimentation helps verify document intelligence, knowledge retrieval, and legal workflow automation.

Every PoC we design considers how the system will scale beyond experimentation. This includes preparing the foundation for applications such as a legal consultation platform, where AI capabilities must support real user interactions and growing datasets.

Validation is only the first stage of product evolution. Our teams help transition successful PoC experiments into production-ready legal platforms while maintaining performance, reliability, and alignment with the workflows legal professionals depend on.

A structured approach to experimentation ensures AI initiatives move forward with clarity. Through careful validation and practical system design, we help legal product teams turn early AI concepts into reliable software solutions.

Clear validation is essential before introducing AI into legal workflows. Through AI PoC development for legal software, organizations can test how AI performs with real documents, real queries, and real operational scenarios. This early validation stage allows teams to identify limitations, refine models, and confirm whether the solution aligns with legal processes.

Once the PoC demonstrates stable performance, teams can move forward with confidence. That is where experienced support from an AI product development company becomes valuable. A well-planned AI legal software product PoC development process not only validates the concept but also prepares the technical foundation needed for larger systems.

When organizations approach this stage with clear goals and the right expertise, AI PoC development services for legal software become a practical step toward scalable legal technology solutions. If you want to evaluate an AI idea for your legal platform, you can connect with us to start that discussion.

AI PoC development for legal software is a structured experiment used to test whether a specific AI capability works with real legal documents and workflows. Legal tech companies start with a PoC to validate feasibility before investing in full product development. It allows teams to test document analysis, legal search, or automation features using controlled datasets and limited infrastructure.

To build an AI proof of concept for legal software, teams typically define a narrow use case such as contract clause extraction or legal document summarization. They then prepare legal datasets, integrate AI models, test the system through a simple interface, and evaluate results with legal experts. The goal is to confirm that the AI can handle real legal workflows before scaling the system.

Organizations usually invest in AI PoC development when they want to test a new capability but are not ready for full platform implementation. This often happens when exploring document intelligence, legal research assistants, or workflow automation. A PoC allows teams to validate the idea using limited resources while understanding the technical and operational implications.

A successful AI legal software product PoC development process focuses on a clearly defined legal use case, reliable document datasets, and measurable evaluation criteria. Legal experts should review the outputs to verify accuracy and relevance. The PoC should also demonstrate that the AI system can process legal documents consistently and respond within acceptable time.

AI PoC development helps reduce risk by validating whether the AI system can interpret legal documents and generate reliable results before significant investment. By testing models on real legal data, organizations can identify technical limitations, data issues, and workflow constraints early in the project lifecycle.

Legal tech startups should focus on selecting a specific legal use case, preparing structured document datasets, and ensuring the system can integrate with existing legal workflows. Evaluation from legal professionals is essential to confirm whether the AI responses align with legal reasoning and professional expectations.
